How Accurate Is REM/Rate as an Energy Modeling Tool?

Michael Blasnik, in case you don’t know, is a home energy geek’s home energy geek. He’s a building science nerd’s building science nerd. He’s known for analyzing data on home energy efficiency and laying bare some of our most-cherished assumptions about home energy efficiency.

In 2009, he and Advanced Energy published a report called the Houston Home Energy Efficiency Study (pdf) that’s jam-packed full of good data analysis. There are so many things to write about from that paper that it’s hard to choose one to start with, but the one that’s calling my name today is the part that deals with the accuracy of REM/Rate, the leading software for doing home energy ratings. Some people have the idea that REM/Rate isn’t very accurate because they do the energy model on a house, and the results are different from what the energy bills show.

Is REM/Rate accurate?

Among the items that the Houston study looked at was this issue of REM/Rate’s accuracy, at least in one area (cooling loads in ENERGY STAR homes in a hot/humid climate). Because the study was based on data from more than 226,000 homes built in Houston, Texas (my original hometown!), they focused on cooling loads. They compared the annual cooling load that REM/Rate spit out to the annual cooling load based on the energy bills. Because they couldn’t get the REM/Rate files for all 226,000 homes, though, they narrowed this part of the study down to 10,258 homes.

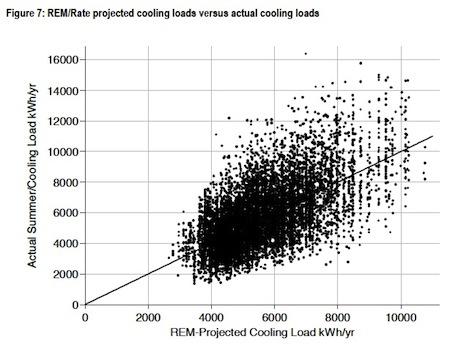

Once they had the data, they plotted them, first in the scatter plot below (from page 41 in the report).

I love this graph! First of all, it’s got a lot of data – 10,258 of them, as a matter of fact. On the horizontal axis is the number that REM/Rate came up with, and on the vertical axis is the cooling load from the energy bills.

Looking at that mass of data, you might think that the line through the middle is the result of a linear regression showing the relationship of the actual to the projected cooling loads. It’s not. That line actually shows the path of a perfect relationship between the actual and projected data. For example, if REM/Rate projects 2000 kWh/yr, the line goes through the point where the actual cooling load is also 2000 kWh/yr. If they had added a best-fit line to the graph, it wouldn’t have been far from the perfect-fit line. That indicates pretty darn good agreement, I’d say.
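If you want to see the difference between a best-fit line and the perfect-fit line for yourself, here's a minimal sketch in Python. It assumes ordinary least squares as the fitting method, and the data are synthetic numbers I made up for illustration, not the Houston study's data. The function name `best_fit_line` is likewise just a name for this example.

```python
import random

def best_fit_line(projected, actual):
    """Ordinary least-squares slope and intercept of actual vs. projected."""
    n = len(projected)
    mean_x = sum(projected) / n
    mean_y = sum(actual) / n
    sxx = sum((x - mean_x) ** 2 for x in projected)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(projected, actual))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Synthetic, made-up data (NOT from the report): projected cooling loads in
# kWh/yr, with "actual" loads scattered randomly around the projection.
rng = random.Random(0)
projected = [rng.uniform(1000, 6000) for _ in range(2000)]
actual = [p + rng.gauss(0, 800) for p in projected]

slope, intercept = best_fit_line(projected, actual)
print(f"best fit: actual = {slope:.2f} * projected + {intercept:.0f} kWh/yr")
```

The perfect-fit line has a slope of 1 and an intercept of 0. The closer the fitted slope and intercept come to those values, the closer the best-fit line sits to the perfect-fit line.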

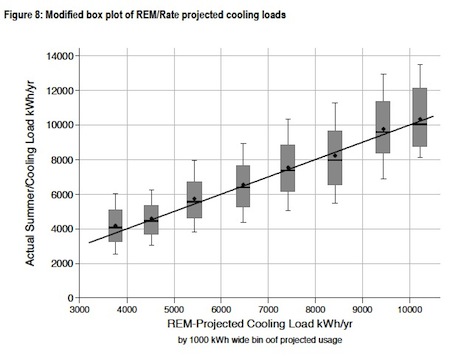

Next, Blasnik condensed the data into a type of box plot, shown below (from page 42 in the report).

The first thing to notice here is, again, the perfect-fit line goes right through the middle. The horizontal black bar shows the median actual energy use for each data bin, and the diamond represents the mean. (The median is the number that half of the data are below and half above. The mean is what we typically think of as the ‘average’ – add all the numbers and divide by how many there are.) As you can see, both the median and the mean are pretty much right on top of the perfect-fit line.

The other thing you can see in this graph is a representation of how much scatter there is in the data. The grey box shows the range of actual cooling load for the middle 50% of the data. The bars above and below the grey boxes show the range of 80% of the data. This way of showing the data de-emphasizes the outliers that kind of grab your attention in the first graph.
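Here's a rough sketch in Python of how the numbers behind a plot like this could be computed. I'm assuming the grey box runs from the 25th to the 75th percentile and the bars from the 10th to the 90th. That's my reading of "middle 50%" and "80% of the data," not something I've confirmed in the report. The function names and the 500 kWh/yr bin width are also just placeholders for this example.

```python
import statistics

def bin_by_projection(projected, actual, bin_width=500):
    """Group actual loads by the bin that the projected load falls into."""
    bins = {}
    for p, a in zip(projected, actual):
        lower_edge = int(p // bin_width) * bin_width
        bins.setdefault(lower_edge, []).append(a)
    return bins

def summarize_bin(actual_loads):
    """Median, mean, and the middle 50% and 80% ranges for one bin."""
    quartiles = statistics.quantiles(actual_loads, n=4)  # 25th, 50th, 75th
    deciles = statistics.quantiles(actual_loads, n=10)   # 10th ... 90th
    return {
        "median": statistics.median(actual_loads),
        "mean": statistics.mean(actual_loads),
        "middle_50": (quartiles[0], quartiles[2]),  # assumed: the grey box
        "middle_80": (deciles[0], deciles[8]),      # assumed: the bars
    }
```

To use it, you'd call `bin_by_projection` on the projected and actual loads, then run `summarize_bin` on each bin's list. Each bin needs at least two homes for the percentiles to be defined.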

Conclusion

Based on these data, I think it’s clear that REM/Rate does a very good job of modeling cooling loads in ENERGY STAR new homes in a hot/humid climate. The agreement between utility data and REM/Rate’s projected cooling loads could hardly be better. As you can see above, there’s no systematic bias that skews REM/Rate’s cooling load results higher or lower than actual loads. The deviations that remain look random.
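One simple way to separate systematic bias from random scatter is to look at the residuals, meaning actual minus projected for each home. Here's a minimal sketch in Python. It's my own illustration with a hypothetical function name, not the method used in the report.

```python
import statistics

def bias_and_scatter(projected, actual):
    """Return (mean residual, sample standard deviation of residuals)."""
    residuals = [a - p for p, a in zip(projected, actual)]
    bias = statistics.mean(residuals)      # near zero means no systematic skew
    scatter = statistics.stdev(residuals)  # size of the home-to-home spread
    return bias, scatter
```

A mean residual near zero alongside a large standard deviation is what you'd expect from a model that's right on average but can be well off for any one house.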

I think the reason that some HERS raters and other folks in the industry think REM/Rate isn’t up to the task probably has more to do with the scatter observed above. Yes, an individual home’s actual cooling use may vary significantly from what REM/Rate models, but that’s most likely the result of bad inputs into the model, extra loads that weren’t accounted for, or occupant behavior.

Of course, modeling older homes and heating, water heating, lights, and appliance loads is a different matter, and the divergence between modeled and actual energy consumption may be quite different. According to Blasnik, “I know from experience that many energy modeling tools—REM included—often do a poor job of modeling heating loads in older, leaky, poorly-insulated homes.” But that’s a topic for another post.

Kudos to Michael Blasnik and Advanced Energy for providing the HERS rating community with such a great analysis and for giving us confidence in the main analysis tool that many of us use, at least in the realm to which it applies.

Download the Houston Home Energy Efficiency Study (pdf) here.

If you want to learn how to use REM/Rate and become a certified Home Energy Rater, check out our HERS Rater Training Class. We have one starting on 8 August.

This Post Has 11 Comments

My issue with REMRate is that it is not a web-based application, it requires Windows, and it is not very intuitive. I had to buy Parallels to run REMRate on my Mac, and there is simply a better way to do things today than having to e-mail completed files for QA. On the other hand, you have ICF’s Beacon software, and while it may lack some of the REMRate functionality, it better fits my way of doing business.

Finally! Data and curve-fitting. I don’t see any triple integrals yet, but I figure those can’t be too far behind!

Seriously, though, I enjoyed this article, Allison, and will make a study of the Houston paper.

There’s no doubt, based on what you’ve cited, that REM/Rate is highly accurate, but I would tend to agree with Lance’s more general criticisms. Also, as home energy modeling gains more traction over time, it wouldn’t be too surprising if a few open software initiatives providing similar analysis/modeling/reporting tools begin to emerge.

Lance: User friendliness, suitability, and features are definitely important when you’re choosing software for energy modeling. I also run REM/Rate through Parallels on a Mac, so I feel your pain there.

John P.: I’m glad you’re as excited by the data as I am. I hate to disappoint you, though, but there’s no curve fitting here. I did mention it, but there’s none shown above. Maybe I can get some in with the triple integration article for you. There are already plenty of options for energy modeling out there now. Have you seen this page: DOE Building Energy Software Tools Directory? You’re gonna think you died and went to heaven!

When I model a retrofit and downsize the ac unit and put the ducts in the thermal boundary, my experience with rem is that it way undershoots the actual savings.

So then do I use it and tell the homeowner to ignore the projection because we’ll really do a lot better than what it says? No. Too much information while trying to sell a retrofit. I stopped using REM for projecting cooling savings just for this reason. Also I almost never sell a retrofit for energy savings, but rather for “let’s do it the way it’s supposed to be done.”

Maybe rem is ok for new homes.

I still think baseload factors are easy to address and don’t need modeling. Beyond that, use Manual J, D, and S to calculate and design the home and project energy costs of comfort.

It may take decades until we get designers to do HVAC right consistently and we see hvac companies install perfectly sized duct and ac systems. Until then I agree that rem is the best chance we have for now for the fray of the professionals.

Just my opinions. Not facts.

True, no curve fitting in those diagrams, just ideal curves. But you alluded to a best-fit curve in the first case being close to the ideal, and all that empiricist-speak got me excited. Thanks for the link. I will definitely go check it out! 🙂

Christopher: You’re absolutely right. When fixing older houses, energy savings is only one of the benefits – comfort, durability, and indoor air quality are often more important to homeowners.

John P.: You’re welcome!

Here’s a link to Dave Robert’s slideshow from the 2008 RESNET Conference, where he describes several previous studies on REM accuracy.

Oops… here’s the link:

http://bit.ly/q7cqfO

David B.: Thanks for the link! Interesting data and results that illustrate where REM/Rate doesn’t work as well.

I just wish the user interface and report outputs were better. It feels like the reports should be printed on a dot matrix printer, with edges you tear off. Remember that?

I’ve just written a blog post with more information on Michael Blasnik’s analysis of REM/Rate accuracy. Here’s the short version: the software isn’t particularly accurate for older buildings. Read more here:

http://www.greenbuildingadvisor.com/blogs/dept/musings/energy-modeling-isn-t-very-accurate